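from transformers import AutoModelForCausalLM, AutoTokenizer

# bnb_config is a BitsAndBytesConfig defined earlier (not shown here)
model = AutoModelForCausalLM.from_pretrained(
    "meta-llama/Llama-3.2-3B",
    quantization_config=bnb_config,
    trust_remote_code=True,
    # bitsandbytes-quantized models are placed on the device at load time;
    # calling .to(device) afterwards can raise an error
    device_map="auto",
)

tokenizer = AutoTokenizer.from_pretrained("meta-llama/Llama-3.2-3B")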

from peft import prepare_model_for_kbit_training, LoraConfig, get_peft_model

lora_config = LoraConfig(
    r=8,
    lora_alpha=32,
    target_modules=["q_proj", "k_proj", "v_proj", "o_proj", "gate_proj", "up_proj", "down_proj"],
    lora_dropout=0.05,
    bias="none",
)

model = prepare_model_for_kbit_training(model)
model = get_peft_model(model, lora_config)

from trl import setup_chat_format

model, tokenizer = setup_chat_format(model, tokenizer)

from trl import SFTConfig, SFTTrainer

args = SFTConfig(
    output_dir="lora_model/",
    per_device_train_batch_size=4,
    per_device_eval_batch_size=4,
    learning_rate=2e-05,
    gradient_accumulation_steps=2,
    max_steps=150,
    logging_strategy="steps",
    logging_steps=5,
    save_strategy="steps",
    save_steps=25,
    eval_strategy="steps",
    eval_steps=5,
    lr_scheduler_type="cosine",
    fp16=True,
    data_seed=42,
    max_seq_length=2048,
    report_to="none",
)

trainer = SFTTrainer(
    model=model,
    args=args,
    processing_class=tokenizer,
    train_dataset=dataset['train'],
    eval_dataset=dataset['test'],
)
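
For reference, here is a rough sketch of what the step-based settings in the config above work out to, assuming a single GPU (the variable names below are only illustrative). It suggests evaluation should be scheduled 30 times over the run, so an empty validation-loss column is not explained by the eval schedule itself.

```python
# Values copied from the SFTConfig above; a single device is assumed.
per_device_train_batch_size = 4
gradient_accumulation_steps = 2
max_steps = 150
eval_steps = 5
save_steps = 25

# Sequences consumed per optimizer step on one device.
effective_batch_size = per_device_train_batch_size * gradient_accumulation_steps

# Total training sequences seen over the whole run.
sequences_seen = effective_batch_size * max_steps

# How many times evaluation and checkpointing are scheduled.
scheduled_evals = max_steps // eval_steps
scheduled_checkpoints = max_steps // save_steps

print(effective_batch_size, sequences_seen, scheduled_evals, scheduled_checkpoints)
```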

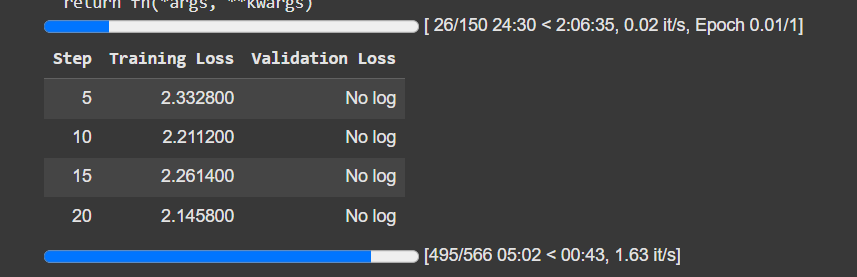

Why am I getting no log for the validation loss?

I'm working on conversational data, so I won't be able to create label_names.

Hmmm… I think I may have found a way to forcefully record it.
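
A minimal sketch of one possible approach, under an assumption the thread does not confirm: that the eval loss is missing because the Trainer cannot infer label names from the PEFT-wrapped model. For causal-LM fine-tuning, the data collator normally builds a `labels` entry from the input IDs, so no separate label column should be needed in the conversational dataset. `label_names` is an existing `TrainingArguments` field that `SFTConfig` inherits; the dict name `eval_logging_kwargs` is just illustrative.

```python
# Extra keyword arguments to merge into the existing SFTConfig(...) call.
# Assumption: the missing eval loss comes from label names not being inferred
# for the PEFT-wrapped model, so they are declared explicitly here.
eval_logging_kwargs = {
    "label_names": ["labels"],  # key the collator adds to each batch
    "eval_strategy": "steps",   # unchanged from the original config
    "eval_steps": 5,            # unchanged from the original config
}

# Intended usage (requires trl, so it is left commented out here):
# args = SFTConfig(output_dir="lora_model/", **eval_logging_kwargs)
print(eval_logging_kwargs)
```

If the cause is something else, this setting should be harmless, but the validation loss will stay empty.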