Dear community,

I am following the OpenAI/HF cookbook to fine-tune gpt-oss:

Fine-tuning with gpt-oss and Hugging Face Transformers

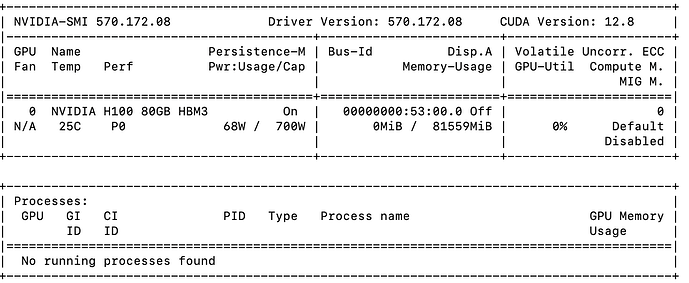

I tried training gpt-oss on an A100 GPU with 80 GB of VRAM, but got the OOM error below.

I had already set my TRL config as follows to try to reduce memory pressure.

I would appreciate your feedback.

    from trl import SFTConfig, SFTTrainer

    training_args = SFTConfig(
        learning_rate=2e-4,
        gradient_checkpointing=True,
        num_train_epochs=1,
        logging_steps=1,
        per_device_train_batch_size=4,
        gradient_accumulation_steps=4,
        max_length=1024,
        warmup_ratio=0.03,
        lr_scheduler_type="cosine_with_min_lr",
        lr_scheduler_kwargs={"min_lr_rate": 0.1},
        output_dir="gpt-oss-20b-multilingual-reasoner",
        push_to_hub=True,
    )

    # peft_model, dataset and tokenizer are created earlier, as in the cookbook
    trainer = SFTTrainer(
        model=peft_model,
        args=training_args,
        train_dataset=dataset,
        processing_class=tokenizer,
    )

    trainer.train()
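As a rough sanity check on the config above, the effective batch size and the number of optimizer steps can be worked out by hand. This is only a sketch: `num_devices = 1` assumes a single A100, and `num_examples = 1000` is a hypothetical dataset size (not stated in this post), chosen because it reproduces the 63 steps shown by the progress bar in the log.

```python
import math

# Values taken from the SFTConfig above
per_device_train_batch_size = 4
gradient_accumulation_steps = 4
num_train_epochs = 1

# Assumptions, not stated in the post
num_devices = 1       # assumed: one A100
num_examples = 1000   # hypothetical dataset size


def effective_batch_size(per_device: int, accumulation: int, devices: int) -> int:
    """Number of examples that contribute to one optimizer step."""
    return per_device * accumulation * devices


def optimizer_steps(examples: int, batch: int, epochs: int) -> int:
    """Approximate optimizer steps, counting a partial last batch as a step."""
    return math.ceil(examples / batch) * epochs


batch = effective_batch_size(
    per_device_train_batch_size, gradient_accumulation_steps, num_devices
)
steps = optimizer_steps(num_examples, batch, num_train_epochs)
print(f"effective batch size: {batch}, optimizer steps: {steps}")
```

Only `per_device_train_batch_size` determines how many sequences sit on the GPU at once; `gradient_accumulation_steps` changes the effective batch size without increasing peak activation memory.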

      0%| | 0/63 [00:43<?, ?it/s]
    Traceback (most recent call last):
      File "", line 1, in
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/trainer.py", line 2316, in train
        return inner_training_loop(
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/trainer.py", line 2674, in _inner_training_loop
        tr_loss_step = self.training_step(model, inputs, num_items_in_batch)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/trl/trainer/sft_trainer.py", line 1190, in training_step
        return super().training_step(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/trainer.py", line 4020, in training_step
        loss = self.compute_loss(model, inputs, num_items_in_batch=num_items_in_batch)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/trl/trainer/sft_trainer.py", line 1103, in compute_loss
        (loss, outputs) = super().compute_loss(
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/trainer.py", line 4110, in compute_loss
        outputs = model(**inputs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1775, in _wrapped_call_impl
        return self._call_impl(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1786, in _call_impl
        return forward_call(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/accelerate/utils/operations.py", line 819, in forward
        return model_forward(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/accelerate/utils/operations.py", line 807, in __call__
        return convert_to_fp32(self.model_forward(*args, **kwargs))
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/amp/autocast_mode.py", line 44, in decorate_autocast
        return func(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/accelerate/utils/operations.py", line 819, in forward
        return model_forward(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/accelerate/utils/operations.py", line 807, in __call__
        return convert_to_fp32(self.model_forward(*args, **kwargs))
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/amp/autocast_mode.py", line 44, in decorate_autocast
        return func(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/peft/peft_model.py", line 921, in forward
        return self.get_base_model()(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1775, in _wrapped_call_impl
        return self._call_impl(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1786, in _call_impl
        return forward_call(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/utils/generic.py", line 918, in wrapper
        output = func(self, *args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/models/gpt_oss/modeling_gpt_oss.py", line 668, in forward
        outputs: MoeModelOutputWithPast = self.model(
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1775, in _wrapped_call_impl
        return self._call_impl(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1786, in _call_impl
        return forward_call(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/utils/generic.py", line 1064, in wrapper
        outputs = func(self, *args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/models/gpt_oss/modeling_gpt_oss.py", line 507, in forward
        hidden_states = decoder_layer(
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/modeling_layers.py", line 93, in __call__
        return self._gradient_checkpointing_func(partial(super().__call__, **kwargs), *args)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/_compile.py", line 53, in inner
        return disable_fn(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/_dynamo/eval_frame.py", line 1044, in _fn
        return fn(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/utils/checkpoint.py", line 496, in checkpoint
        return CheckpointFunction.apply(function, preserve, *args)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/autograd/function.py", line 581, in apply
        return super().apply(*args, **kwargs)  # type: ignore[misc]
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/utils/checkpoint.py", line 262, in forward
        outputs = run_function(*args)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1775, in _wrapped_call_impl
        return self._call_impl(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1786, in _call_impl
        return forward_call(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/utils/deprecation.py", line 172, in wrapped_func
        return func(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/models/gpt_oss/modeling_gpt_oss.py", line 371, in forward
        hidden_states, _ = self.self_attn(
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1775, in _wrapped_call_impl
        return self._call_impl(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1786, in _call_impl
        return forward_call(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/utils/deprecation.py", line 172, in wrapped_func
        return func(*args, **kwargs)
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/models/gpt_oss/modeling_gpt_oss.py", line 328, in forward
        attn_output, attn_weights = attention_interface(
      File "/home/user/.pyenv/versions/3.10.19/lib/python3.10/site-packages/transformers/models/gpt_oss/modeling_gpt_oss.py", line 253, in eager_attention_forward
        attn_weights = torch.matmul(query, key_states.transpose(2, 3)) * scaling
    torch.OutOfMemoryError: CUDA out of memory. Tried to allocate 512.00 MiB. GPU 0 has a total capacity of 79.25 GiB of which 401.88 MiB is free. Process 213371 has 78.85 GiB memory in use. Of the allocated memory 77.36 GiB is allocated by PyTorch, and 1011.53 MiB is reserved by PyTorch but unallocated. If reserved but unallocated memory is large try setting PYTORCH_CUDA_ALLOC_CONF=expandable_segments:True to avoid fragmentation. See documentation for Memory Management (CUDA semantics - PyTorch 2.9 documentation)
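
The failing line is the eager attention score computation (`query @ key^T`), which materializes a tensor of shape `(batch, heads, seq_len, seq_len)`. A back-of-the-envelope estimate of its size seems to line up with the 512.00 MiB allocation in the error. This is a sketch under assumptions that are not stated in the post: 64 query heads (the value I believe the gpt-oss-20b config uses), bf16 scores at 2 bytes per element, and a batch padded to the full `max_length`.

```python
# Values taken from the SFTConfig above
per_device_train_batch_size = 4
max_length = 1024

# Assumptions, not stated in the post
num_attention_heads = 64   # assumed gpt-oss-20b query head count
bytes_per_element = 2      # assumed bf16 attention scores


def attention_scores_mib(batch: int, heads: int, seq_len: int, nbytes: int) -> float:
    """Size in MiB of one (batch, heads, seq_len, seq_len) score tensor."""
    return batch * heads * seq_len * seq_len * nbytes / 2**20


# Compare the current per-device batch size with smaller ones
for batch in (4, 2, 1):
    size = attention_scores_mib(
        batch, num_attention_heads, max_length, bytes_per_element
    )
    print(f"batch={batch}: {size:.2f} MiB for one layer's attention scores")
```

Under these assumptions the batch of 4 gives exactly 512 MiB, and the tensor shrinks linearly with the per-device batch size and quadratically with sequence length. Halving `per_device_train_batch_size` while doubling `gradient_accumulation_steps` would keep the effective batch size at 16 and halve this allocation, though whether that alone is enough to fit in 80 GB is not something this estimate can show.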