When I plot the output of ViTImageProcessor with Matplotlib, I see the same image shrunk and repeated 6 times inside a 224x224 frame, each copy with a different contrast and intensity.

If I understand correctly, ViT divides the image into patches, so the whole image should have been split into 16x16 patches. But the result does not look like that.

What is the issue here?

ViT takes an input of resolution 224x224. ViTImageProcessor only resizes the image to that resolution, rescales the pixel values and normalises them.
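Roughly, that preprocessing can be sketched in NumPy as below. This is a minimal sketch, not the library's code: it assumes the default config (224x224 output, rescale by 1/255, per-channel mean and std of 0.5) and swaps in nearest-neighbour resizing for the bilinear resampling the real processor uses by default. The helper names `nearest_resize` and `preprocess` are hypothetical.

```python
import numpy as np

# Assumed defaults (ViTImageProcessor's out-of-the-box config as far as we know):
# 224x224 output, rescale by 1/255, per-channel mean = std = 0.5.
TARGET_SIZE = 224
MEAN = np.array([0.5, 0.5, 0.5], dtype=np.float32)
STD = np.array([0.5, 0.5, 0.5], dtype=np.float32)


def nearest_resize(image: np.ndarray, size: int) -> np.ndarray:
    """Hypothetical helper: nearest-neighbour resize of (H, W, C) to (size, size, C).

    Stand-in for the bilinear resampling the real processor uses.
    """
    h, w = image.shape[:2]
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    return image[rows[:, None], cols[None, :]]


def preprocess(image: np.ndarray) -> np.ndarray:
    """Hypothetical helper: (H, W, 3) uint8 RGB -> (1, 3, 224, 224) float32."""
    resized = nearest_resize(image, TARGET_SIZE).astype(np.float32)
    rescaled = resized / 255.0                # [0, 255] -> [0, 1]
    normalized = (rescaled - MEAN) / STD      # [0, 1] -> [-1, 1]
    chw = np.transpose(normalized, (2, 0, 1))  # (H, W, C) -> (C, H, W)
    return chw[None]                           # add the batch dimension


rng = np.random.default_rng(0)
photo = rng.integers(0, 256, size=(480, 640, 3), dtype=np.uint8)
pixel_values = preprocess(photo)
print(pixel_values.shape)  # (1, 3, 224, 224)
```

Note that the result is channels-first with a batch dimension in front, which is not the (H, W, 3) layout image viewers expect.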

The 16x16 patches you mention are extracted from this processed image inside the ViT model; the processor itself does not split the image into patches.
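For reference, in the Hugging Face ViT implementation the patching is done inside the model by a convolution whose kernel size and stride both equal the patch size. That is equivalent to cutting the (3, 224, 224) tensor into non-overlapping patches and applying one shared linear projection to each. The NumPy sketch below shows only the cutting step; the patch size of 16 is an assumption matching the common ViT-Base/16 checkpoints, and `to_patches` is a hypothetical helper.

```python
import numpy as np

PATCH = 16  # assumed patch size (ViT-Base/16-style checkpoints)


def to_patches(pixel_values: np.ndarray, patch: int = PATCH) -> np.ndarray:
    """Hypothetical helper: split a (C, H, W) image into non-overlapping patches.

    Returns (num_patches, C * patch * patch): one flattened patch per row,
    ordered left to right, then top to bottom.
    """
    c, h, w = pixel_values.shape
    gh, gw = h // patch, w // patch
    x = pixel_values.reshape(c, gh, patch, gw, patch)  # split H and W into a grid
    x = x.transpose(1, 3, 0, 2, 4)                     # (gh, gw, C, patch, patch)
    return x.reshape(gh * gw, c * patch * patch)


image = np.random.default_rng(0).standard_normal((3, 224, 224)).astype(np.float32)
patches = to_patches(image)
print(patches.shape)  # (196, 768): a 14x14 grid of 16*16*3 values each
```

The model never turns these patches back into a picture, so the pixel_values you plot should still look like one whole resized image.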

Also, if you gave ViTImageProcessor an RGB image, you should get an RGB image back: 224x224 with 3 channels. I don't know how you got a 6-channel image.

This is my input image

This is my output after passing it through the ViTImageProcessor

I am visualising the processed image with Matplotlib. Could that be the issue?

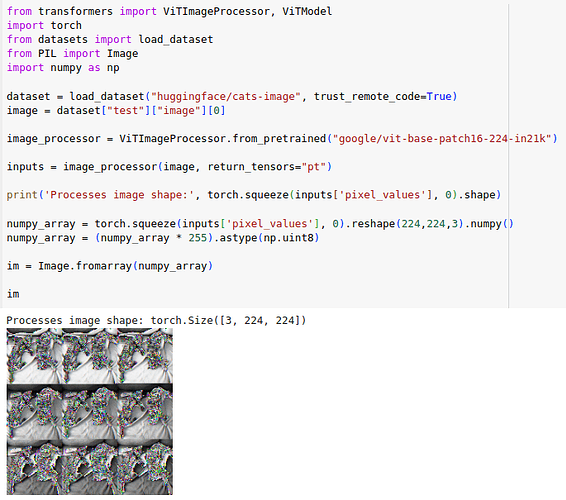

I don't know what code you ran, but could you post the pixel_values output of ViTImageProcessor after removing (squeezing) its batch dimension, moving the channel axis last, and converting it to an image with PIL's Image.fromarray function?

Hi,

Here’s how you can visualize the output of ViTImageProcessor:

```python
import numpy as np
import requests
from PIL import Image
from transformers import ViTImageProcessor

image_processor = ViTImageProcessor()

url = 'http://images.cocodataset.org/val2017/000000039769.jpg'
image = Image.open(requests.get(url, stream=True).raw)

# shape (1, 3, 224, 224): batch, channels, height, width
pixel_values = image_processor(image, return_tensors="pt").pixel_values

# denormalize the pixel values for visualization purposes
mean = image_processor.image_mean
std = image_processor.image_std
unnormalized_image = (pixel_values[0].numpy() * np.array(std)[:, None, None]) + np.array(mean)[:, None, None]
# back to [0, 255]; the clip guards against tiny floating-point overshoot
unnormalized_image = (np.clip(unnormalized_image, 0, 1) * 255).astype(np.uint8)
# move channels last: (3, 224, 224) -> (224, 224, 3), the layout PIL and Matplotlib expect
unnormalized_image = np.moveaxis(unnormalized_image, 0, -1)
unnormalized_image = Image.fromarray(unnormalized_image)
```

which gives me this:

This is a 224x224 image.

That is the output you get after un-normalizing. My question is why, after passing through ViTImageProcessor, the image gets smaller and is arranged in patches. You can refer to the output I pasted.
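One likely explanation (an assumption, since the plotting code isn't shown in the thread) is converting the channels-first (3, 224, 224) array to channels-last with reshape instead of a transpose or np.moveaxis. reshape keeps the flat memory order and just re-chops it, so each output row is filled with three consecutive input rows, and each vertical third of the picture comes from a different colour channel. Plotted, that looks like a grid of shrunken copies with different intensities. The sketch uses np.arange values as a stand-in for real pixel data so positions are easy to check.

```python
import numpy as np

# Stand-in for pixel_values[0]: a channels-first (C, H, W) array whose values
# encode their own flat position, so we can see where each value ends up.
chw = np.arange(3 * 224 * 224).reshape(3, 224, 224)

wrong = chw.reshape(224, 224, 3)      # re-chops the flat buffer: scrambles pixels
right = np.transpose(chw, (1, 2, 0))  # moves the channel axis: (C, H, W) -> (H, W, C)

# The transpose keeps pixel (row 10, col 20) intact across its channels.
print(np.array_equal(right[10, 20], chw[:, 10, 20]))  # True
print(np.array_equal(wrong[10, 20], chw[:, 10, 20]))  # False

# With reshape, output row 0 is filled by input rows 0-2 of channel 0,
# i.e. the picture is squeezed and repeated across the width.
print(np.array_equal(wrong[0].reshape(-1), chw[0, 0:3].reshape(-1)))  # True
```

With real data, np.moveaxis(x, 0, -1) on the NumPy array (or x.permute(1, 2, 0) on the torch tensor) gives the (224, 224, 3) layout that plt.imshow expects for RGB.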

I ran into this exact question too, and eventually figured out what was happening.

What is ViTImageProcessor doing? - #4 by raygx.

Check the last reply that I gave. You’ll know how to reconstruct the image.
