I’m getting the error “IndexError: tuple index out of range” when trying to run the `LongformerForQuestionAnswering` model on a custom dataset.

I’ve migrated everything over to Hugging Face. I load my dataset into pandas, then convert it to an HF `Dataset` using `Dataset.from_pandas()`. The columns are tokenized with a `PreTrainedTokenizerFast` in this function:

```python
def prepare_train_features(examples, tokenizer):
    # Tokenize question/context pairs; only the context gets truncated.
    tokenized_examples = tokenizer(
        examples["question"],
        examples["context"],
        truncation="only_second",
        max_length=2048,
        return_overflowing_tokens=True,
        return_offsets_mapping=True,
        padding="max_length",
        return_tensors="pt",
    )

    # In a batched Dataset.map call, `examples` is a dict of column lists,
    # so iterating over it yields column names; read the label columns directly.
    tokenized_examples["start_positions"] = examples["start_idx"]
    tokenized_examples["end_positions"] = examples["end_idx"]

    return tokenized_examples
```
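One detail that may matter here: with `return_overflowing_tokens=True`, a context longer than `max_length` is split into several features, so the tokenizer output can have more rows than `examples` has label entries. Labels then have to be assigned per feature via `overflow_to_sample_mapping`, and a character-level answer span has to be converted to token indices via `offset_mapping`. Below is a minimal pure-Python sketch, assuming `start_idx`/`end_idx` are character offsets into `context` with `end_idx` exclusive (the post doesn't say which). The helper names are hypothetical; the convention of labelling windows that don't contain the answer with token 0 (the `<s>`/CLS position) follows the Hugging Face question-answering examples.

```python
from typing import List, Optional, Sequence, Tuple

Offsets = Sequence[Tuple[int, int]]


def char_span_to_token_span(
    offsets: Offsets,
    sequence_ids: Sequence[Optional[int]],
    start_char: int,
    end_char: int,
) -> Tuple[int, int]:
    """Map a character span of the context to (start, end) token indices.

    Returns (0, 0) if the answer is not fully inside this window.
    end_char is treated as exclusive.
    """
    # Context tokens carry sequence id 1 (the second sequence of the pair).
    ctx_start = sequence_ids.index(1)
    ctx_end = len(sequence_ids) - 1 - list(reversed(sequence_ids)).index(1)

    # Answer falls (partly) outside this window: point both labels at token 0.
    if offsets[ctx_start][0] > start_char or offsets[ctx_end][1] < end_char:
        return 0, 0

    # Last context token starting at or before start_char.
    start_tok = ctx_start
    while start_tok <= ctx_end and offsets[start_tok][0] <= start_char:
        start_tok += 1
    # First context token ending at or after end_char.
    end_tok = ctx_end
    while end_tok >= ctx_start and offsets[end_tok][1] >= end_char:
        end_tok -= 1
    return start_tok - 1, end_tok + 1


def align_labels(
    overflow_to_sample_mapping: Sequence[int],
    offset_mapping: Sequence[Offsets],
    sequence_ids_per_feature: Sequence[Sequence[Optional[int]]],
    start_idx: Sequence[int],
    end_idx: Sequence[int],
) -> Tuple[List[int], List[int]]:
    """Compute one (start, end) label pair per tokenized feature."""
    starts: List[int] = []
    ends: List[int] = []
    for feature, sample in enumerate(overflow_to_sample_mapping):
        # Each feature inherits the answer span of the example it came from.
        s, e = char_span_to_token_span(
            offset_mapping[feature],
            sequence_ids_per_feature[feature],
            start_idx[sample],
            end_idx[sample],
        )
        starts.append(s)
        ends.append(e)
    return starts, ends
```

Inside `prepare_train_features` this could be called with `tokenized_examples["overflow_to_sample_mapping"]`, `tokenized_examples["offset_mapping"]`, `[tokenized_examples.sequence_ids(i) for i in range(len(tokenized_examples["input_ids"]))]`, and the raw `examples["start_idx"]`/`examples["end_idx"]` columns, ideally without `return_tensors="pt"` so the offsets stay plain Python lists.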

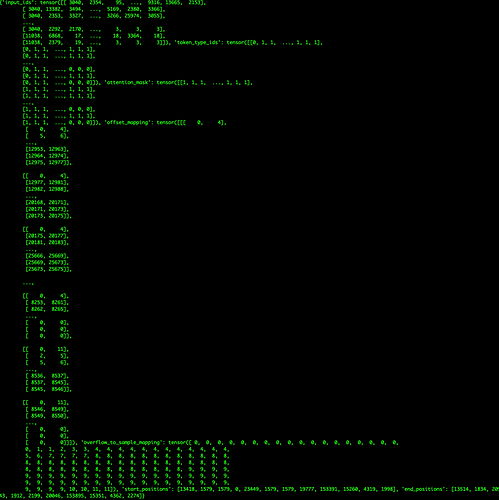

This returns the dataset which looks like the following image:

So everything appears to be correct. The problem occurs in the call to the model itself, in the `compute_loss` step of the `Trainer`. When I print the value of `inputs`, it is an empty tuple.
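Since `inputs` ends up empty, one thing that may be worth checking is which dataset columns survive the `Trainer`'s column filtering. With the default `remove_unused_columns=True`, the `Trainer` drops every column whose name is not a parameter of the model's `forward` method, while keeping label columns such as those listed in `label_names`. The sketch below only approximates that rule with `inspect.signature`; it is not the `Trainer` code itself, and `columns_trainer_would_drop` is a hypothetical helper.

```python
import inspect
from typing import Callable, Iterable, List


def columns_trainer_would_drop(
    forward: Callable,
    column_names: Iterable[str],
    label_names: Iterable[str] = (),
) -> List[str]:
    """Approximate which columns Trainer removes when remove_unused_columns=True."""
    # Columns are kept if they match a parameter of forward() ...
    keep = set(inspect.signature(forward).parameters)
    # ... or are one of the label columns Trainer preserves.
    keep |= {"label", "label_ids", *label_names}
    return [name for name in column_names if name not in keep]
```

For example, `columns_trainer_would_drop(model.forward, train_dataset.column_names, training_args.label_names)` lists the columns that would be stripped; if `input_ids` and `attention_mask` show up there, nothing useful would reach `compute_loss`.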

My training arguments are fairly standard:

```python
from transformers import TrainingArguments

training_args = TrainingArguments(
    output_dir="./models/trained_models",
    evaluation_strategy="epoch",
    save_strategy="epoch",
    fp16=True,
    label_names=["start_positions", "end_positions"],
    ddp_find_unused_parameters=False,
    per_device_train_batch_size=12,
    per_device_eval_batch_size=12,
    dataloader_num_workers=0,
    weight_decay=0.01,
    num_train_epochs=EPOCHS,  # EPOCHS is defined elsewhere in my script
    learning_rate=3e-5,
    load_best_model_at_end=True,
    metric_for_best_model="rouge",
    do_train=True,
    do_eval=True,
)
```
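A related point about `metric_for_best_model="rouge"`: the `Trainer` looks this name up with an `eval_` prefix (so `eval_rouge`) in the evaluation metrics when choosing the best checkpoint, so the metrics must contain a key named `rouge`. The ROUGE metric in the `evaluate` library reports `rouge1`, `rouge2`, `rougeL` and `rougeLsum` rather than a single `rouge` score, so `compute_metrics` would have to add that key itself. A small sketch under those assumptions; both helper names are hypothetical, and aliasing `rougeL` is an arbitrary choice.

```python
from typing import Dict, Mapping


def best_metric_key(metric_for_best_model: str) -> str:
    # Trainer adds an "eval_" prefix unless the name already has one.
    if metric_for_best_model.startswith("eval_"):
        return metric_for_best_model
    return f"eval_{metric_for_best_model}"


def with_rouge_alias(rouge_scores: Mapping[str, float], variant: str = "rougeL") -> Dict[str, float]:
    # Keep every ROUGE variant and copy one of them to a plain "rouge" key,
    # so that metric_for_best_model="rouge" has a value to look up.
    metrics = dict(rouge_scores)
    metrics["rouge"] = rouge_scores[variant]
    return metrics
```

`compute_metrics` would then return `with_rouge_alias(scores)`, where `scores` is the dict produced by the ROUGE metric. Alternatively, setting `metric_for_best_model="rougeL"` avoids the alias altogether.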

I’m using the `QuestionAnsweringTrainer` provided in this repo:

All of this is in service of trying to use HF’s ROUGE metric (which didn’t seem to like my torch dataset).
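ROUGE scores strings, so the metric expects `predictions` and `references` as lists of text rather than tensors, which could be why a torch dataset didn't work with it. The model's `start_logits` and `end_logits` first have to be decoded back into answer text. A simplified sketch for a single feature, assuming the offsets of non-context tokens have already been replaced by `None` (as the Hugging Face question-answering examples do for evaluation features); `best_span_text` is a hypothetical helper and, unlike the full post-processing in those examples, it does not aggregate candidates across overflowing windows.

```python
from typing import Optional, Sequence, Tuple


def best_span_text(
    start_logits: Sequence[float],
    end_logits: Sequence[float],
    offsets: Sequence[Optional[Tuple[int, int]]],
    context: str,
    max_answer_len: int = 30,
) -> str:
    """Return the context text of the highest-scoring valid (start, end) span.

    offsets[i] is None for tokens outside the context (question, special, padding).
    """
    best_score, best = float("-inf"), None
    for s, s_off in enumerate(offsets):
        if s_off is None:
            continue
        # Only consider spans with end >= start and at most max_answer_len tokens.
        for e in range(s, min(s + max_answer_len, len(offsets))):
            e_off = offsets[e]
            if e_off is None:
                continue
            score = start_logits[s] + end_logits[e]
            if score > best_score:
                best_score, best = score, (s_off[0], e_off[1])
    if best is None:
        return ""
    return context[best[0]:best[1]]
```

The resulting strings, together with the gold answer texts, would then be passed as `predictions` and `references` to the ROUGE metric.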

Any help would be greatly appreciated!